The Age of Augmentation - Part 3

A universe of new possibilities is rapidly evolving with generative AI.

With all the excitement about generative AI, it can be challenging to separate the signal from the noise and understand where the opportunities lie. We are clearly at an inflection point in how augmentation will change the nature of work, and we want to provide some guideposts on how the landscape is evolving and where startups can create durable value (refer to Part 1 and Part 2 for background).

Morgan Stanley predicts that AI will create a $6+ trillion opportunity along with substantial productivity gains. Goldman Sachs estimates that 300M+ jobs worldwide could be affected by generative AI, and that it could add 7% to GDP (almost $7 trillion) and lift productivity growth by 1.5% over a 10-year period. We are still early in the cycle, as the majority of desk workers haven’t changed their workflows or tool stack yet. That is starting to change rapidly: every knowledge worker who performs a repetitive and/or skill-based task in front of a screen is beginning to leverage AI to create more and better output with less input. The first empirical study of generative AI’s productivity impact in an enterprise environment, led by Erik Brynjolfsson at a Fortune 500 company, demonstrated a 14% increase in productivity as well as happier workers.

Our findings reveal that around 80% of the U.S. workforce could have at least 10% of their work tasks affected by the introduction of LLMs, while approximately 19% of workers may see at least 50% of their tasks impacted.

- Working paper published March 27, 2023 by OpenAI and University of Pennsylvania

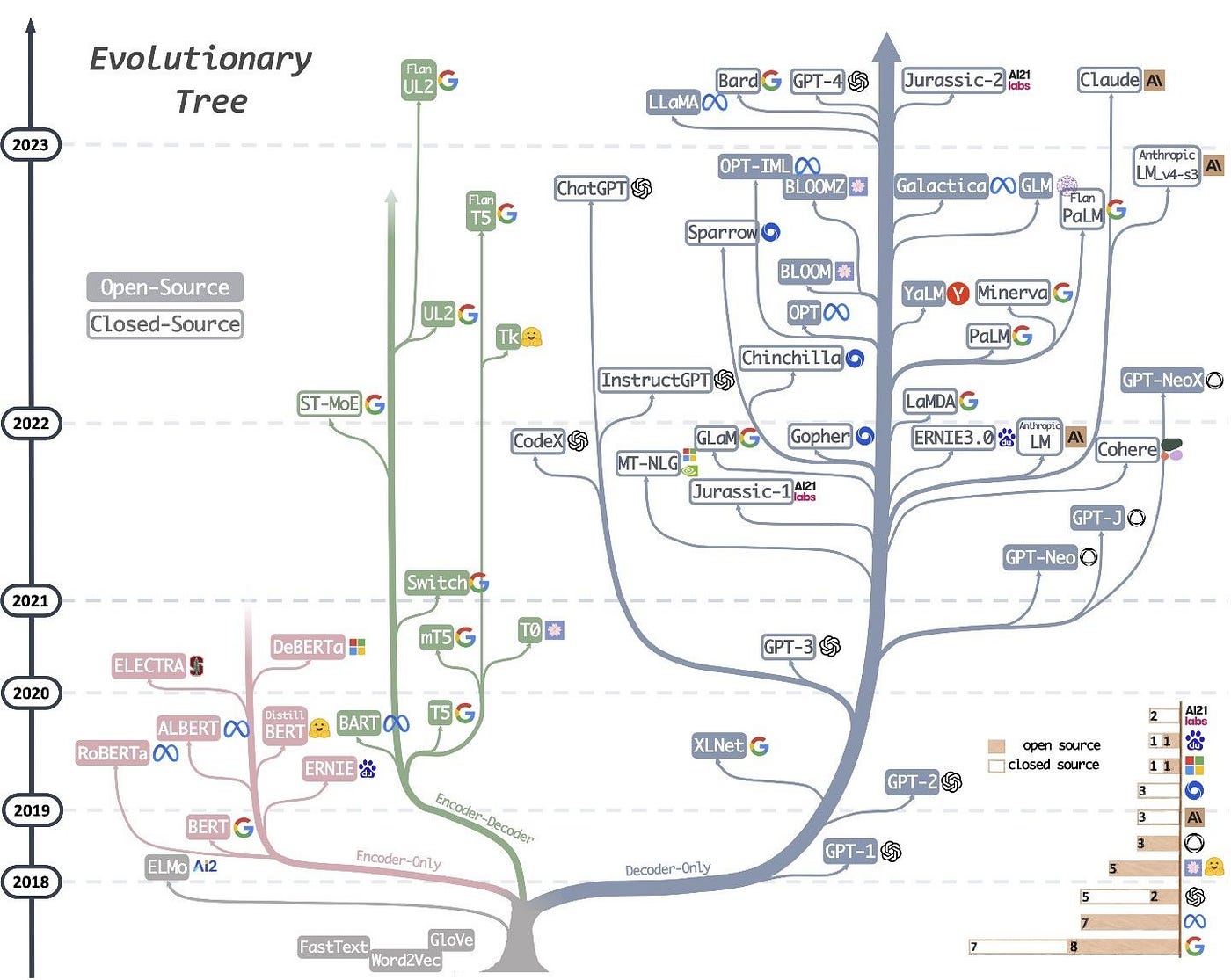

The current generative AI startup universe is driven by the democratization of foundation models, or large language models (LLMs), either through APIs or through open-source models. The evolutionary tree of LLMs in the Practical Guide for LLMs helps visualize and navigate this vast landscape.

Source: Jingfeng Yang

While we are still in the early innings of scaling these foundation models, we are already seeing an explosion of opportunities across the AI stack, with fundamental disruption at both the application and infrastructure layers. Because developers have access to the same underlying models, this is a field of fierce competition. Furthermore, the centralization of power at the foundation layer creates vulnerabilities for application-layer startups. While it has historically been highly expensive and complex to produce foundation models, there are endless opportunities to develop more specialized models and to bundle general capabilities into something that a particular target market needs. This is the equivalent of vertical SaaS, applied to AI. We will see a lot of AI-enabled SaaS plays that provide a holistic solution with great UX for a particular market. For these startups, the goal is to reduce reliance on OpenAI’s models, fine-tune models more closely to their specific use case, and keep the data their own models generate. Further down in the stack, providing the right kind of tooling and training data, enabling ML engineers to build specialized models quickly, and assuring the robustness of models are all viable businesses.

A brief recap of key developments in the last few months.

With the blistering pace of product releases leveraging LLMs, we’re seeing workflows that were the singular focus of a startup become cannibalized as Microsoft and Google release new AI-native features. With the release of ChatGPT Plugins, OpenAI positioned itself as a platform. The release has been dubbed ChatGPT’s “iPhone moment”, meaning the bot is the operating system and the plugins (including those from Instacart, OpenTable, and Kayak) are the apps. In the short and medium term, there’s plenty of opportunity for all involved. In the long term, however, as companies build on the platform, the underlying AI may grow capable of replicating their capabilities, potentially rendering them obsolete.

We are also seeing the rise of a cohort of LLM startups that have raised mega rounds, such as Anthropic, Cohere and Stability AI (the company behind Stable Diffusion), to fund the compute and inference costs of training their models and serving their customers, and to compete with OpenAI and large tech companies such as Google with Bard. This leads us to ponder: what will the structure of the foundation model market look like in the long run? How consolidated will it be? Who will win?

We saw AI agents emerge with AutoGPT, which lets GPT-4 chain multiple actions together to work like a human. Its GitHub repo has shown the fastest growth in history, eclipsing a decades-old open-source project in two weeks. Agents can interpret natural language (reasoning about an input), connect to external knowledge bases (such as your databases), and then leverage APIs to take action (sending an email or importing/loading a file). AutoGPT has now been connected to the internet and allowed to run autonomously. Expect AI agents to become increasingly proficient at solving more complex tasks.
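The interpret, look up, act loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how AutoGPT is actually implemented: `plan_next_step` is a hard-coded stand-in for the LLM call, and `lookup_customer`, `send_email`, and the sample knowledge base are hypothetical names invented for this example.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str, str]  # (tool name, argument, result)


def lookup_customer(name: str) -> str:
    """Hypothetical knowledge-base lookup (stands in for a real database)."""
    knowledge_base = {"alice": "alice@example.com"}
    return knowledge_base.get(name.lower(), "unknown")


def send_email(address: str) -> str:
    """Hypothetical action (stands in for a real email API call)."""
    return f"email sent to {address}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_customer": lookup_customer,
    "send_email": send_email,
}


def plan_next_step(goal: str, history: List[Step]) -> Tuple[str, str]:
    """Stub for the LLM: picks the next tool and argument, or 'finish'.

    A real agent would send the goal and history to a model and parse
    its reply; here the policy is hard-coded for illustration.
    """
    if not history:
        # Assumption: the last word of the goal is the customer name.
        return "lookup_customer", goal.split()[-1]
    last_tool, _, last_result = history[-1]
    if last_tool == "lookup_customer" and last_result != "unknown":
        return "send_email", last_result
    return "finish", last_result


def run_agent(goal: str, max_steps: int = 5) -> List[Step]:
    """Chain tool calls until the planner says 'finish' or steps run out."""
    history: List[Step] = []
    for _ in range(max_steps):
        tool, arg = plan_next_step(goal, history)
        if tool == "finish":
            break
        history.append((tool, arg, TOOLS[tool](arg)))
    return history


if __name__ == "__main__":
    for step in run_agent("Email Alice"):
        print(step)
```

The `max_steps` cap is a design choice worth keeping in any real agent: an autonomous loop with no step limit can keep calling tools indefinitely.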

The question has also been raised whether foundation models themselves are defensible. A team of Stanford students was able to take an open-source LLM, LLaMA 7B, and fine-tune it on 52,000 instruction-following demonstrations generated from OpenAI’s text-davinci-003. The resulting LLM, dubbed Alpaca 7B, can run on a MacBook and has roughly comparable performance to OpenAI’s GPT-3.5, which relies on massive cloud compute. It took OpenAI 4.5 years and over $1B raised to launch GPT-3, whereas Alpaca’s training costs totaled less than $600! With the leak of Meta’s LLaMA model in March and coder Georgi Gerganov’s quantization of the model, running LLaMA shifted from expensive GPU hardware to a mere MacBook, which triggered incredible innovation.
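To see why quantization matters for running a 7B-parameter model on a laptop, the sketch below shows a deliberately simplified 4-bit scheme and the resulting memory arithmetic. It is an illustration only, not Gerganov's actual method: real implementations typically quantize weights in small blocks with per-block scales, and the size estimate here ignores that overhead. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float, float]:
    """Map each weight to one of 16 evenly spaced levels (codes 0..15)."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 if all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize(codes: List[int], scale: float, lo: float) -> List[float]:
    """Recover approximate weights from their 4-bit codes."""
    return [lo + c * scale for c in codes]


def model_size_gb(n_params: float, bits_per_weight: int) -> float:
    """Rough weight-storage estimate; ignores scales and other overhead."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    weights = [-1.0, -0.2, 0.0, 0.35, 1.0]
    codes, scale, lo = quantize_4bit(weights)
    print(codes, dequantize(codes, scale, lo))
    # A 7B-parameter model at 16 bits vs. 4 bits per weight.
    print(model_size_gb(7e9, 16), model_size_gb(7e9, 4))
```

Under these assumptions, the weights of a 7B-parameter model shrink from roughly 14 GB at 16 bits to roughly 3.5 GB at 4 bits, which is the difference between needing a data-center GPU and fitting in a laptop's memory, at the cost of a small rounding error per weight.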

This shift to open source can be compared to Google’s Android open-source release in 2009, which led not only to a developer boom building billions of mobile applications but also enabled the software to run on any hardware device (HTC, Samsung, etc.). A Google internal memo leaked in May cautioned that the open-source free-for-all is threatening Big Tech’s grip on AI and that the tech giant does not have a competitive moat, especially as its internal secrets are hard to safeguard given the consistent brain drain from the company to competitors and startups.

Just this past Wednesday, with its dominance in search threatened, Google announced plans to infuse generative AI into its search engine to compete against Microsoft’s ChatGPT-powered search, the latest move in the AI arms race.